Tracing Apps and Digital Divide (3): Supplementary Measures, User Involvement, and the Ethical Responsibility of Designers

From the designers’ own perspective, ensuring equality, paying attention to diverse concerns from different groups, and preventing potentially adverse social effects should always be a priority in the designing process.

Written by Jack Linzhou Xing

Published on 18/05/2021

In the first and second articles in this series, we discussed the different designs of the tracing apps in mainland China, Hong Kong, and Singapore, and analyzed the path dependency behind those designs and their unintended consequences. It is safe to argue that in none of these cases was the user experience of people who have more difficulty interacting with digital devices a design concern. Even in the relatively user-friendly Hong Kong and Singaporean cases, the user-friendliness is merely an unintended consequence of design principles that were initially aimed at solving the privacy problem. It is therefore not surprising that various forms of the digital divide, in this case mainly between relatively young people and the elderly, appear in the usage scenarios of the different tracing apps.

Supplementing Technology with Human Measures

How, then, should we deal with the difficulties older people encounter in everyday life? By redesigning everything? Impossible. Tracing apps and the systems behind them possess the dual qualities of network products and infrastructures. As a network product, a tracing system has a network effect: the more users there are in the network, the more utility each user gets from it, and the more users tend to prefer this network to others. Therefore, abandoning the existing system and designing another can be costly from the government’s perspective and harmful to the usefulness of the tracing system. As an infrastructure, once the system is built, radical changes to it can, within a short period, seriously disrupt its operation and people’s use of it. All these potential problems, which we can summarize as switching costs, render redesign infeasible.

It seems that the only way out is to use human measures as a supplement. In fact, many places are doing that. In mainland China, many public transportation facilities have set up “channels for people without health QR codes (无健康码通道)”, through which elderly people, people without smartphones, and people whose smartphones have run out of battery can write down their ID numbers, report their home addresses, have their body temperature taken, and board public transportation. Places such as Shanghai also allow citizens to use health proof issued by authorized hospitals or community medical centers in lieu of health QR codes, with the proof valid for 14 days.

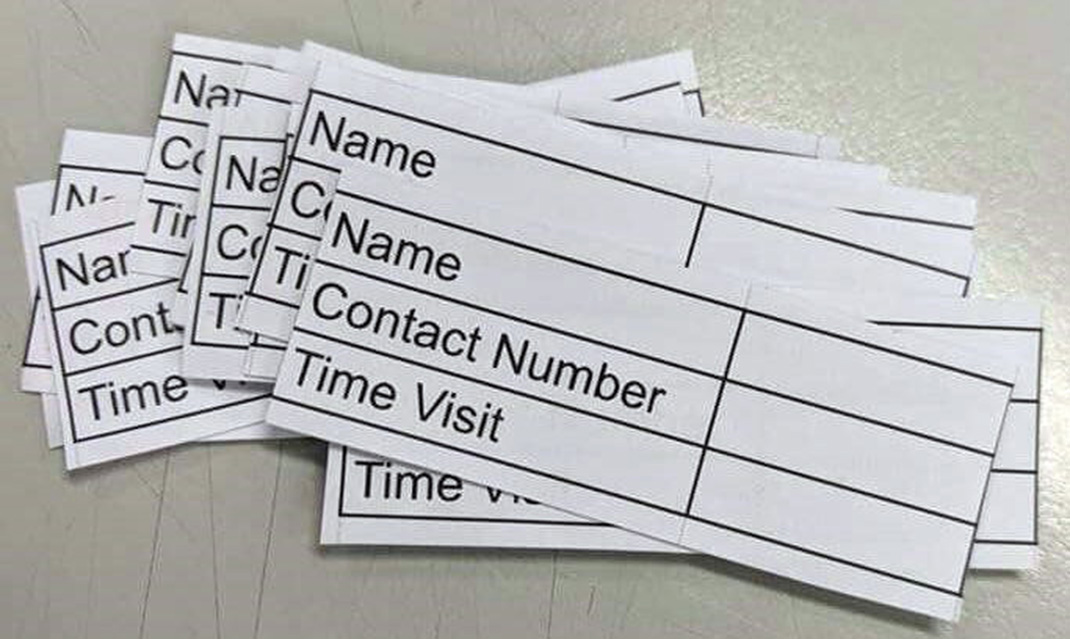

Hong Kong has similar arrangements. For people who are unwilling or unable to use LeaveHomeSafe, operators of public places such as restaurants, movie theaters, and sports centers are required to provide small paper registration forms.[1] Citizens who choose not to use LeaveHomeSafe can write down their phone numbers and drop the form into a small box. The box is opened only when a positive case or a close contact is found to have entered the premises. This arrangement was initially designed for those who do not trust the government and suspect that it will use LeaveHomeSafe to access the information on their smartphones. However, it can also help mitigate the digital divide problem, again in an unintended way.

These strategies of using human labor to mitigate the problems caused by digital technology reaffirm the critical lesson that technology can never solve all problems without involving the social.

User Involvement in Design

Supplementary measures that deal with problems after an infrastructural system has been widely deployed seem to be the only feasible way. However, what could we do if we need to design a similar system again next time? Are there ways to minimize the potential problem of the digital divide in the first place? This obviously needs to be addressed at the design stage. One strategy is to genuinely involve users in the design process.

By involving users, I do not mean superficially “caring about users’ needs”, as claimed by virtually every designer and company. According to Steve Woolgar, this can be called “configuring”, which is the process of “defining the identity of putative users, and setting constraints upon their likely future actions”.[2] The result of this process, he argues, is “a machine that encourages only specific forms of access and use”.[3] In this sense, however much designers care about users’ needs, the concerns they address are putative rather than drawn from users’ own voices. Correspondingly, it is not surprising that many products whose designers make this claim have “unintended consequences” for user experience.

Instead, the appropriate execution of such a strategy is to invite users with different profiles to actually participate in the design process by presenting their needs and concerns and communicating them to the designers. Such a democratic process requires a great deal of real, person-to-person negotiation and interaction, from the initiation of the design project through all the testing phases. For example, in the design of tracing apps, older people, disabled people, and people without smartphones should all have representatives in the design meetings and be able to express their concerns about the potential inconveniences and shortcomings of each design.

Obviously, this can be difficult; sometimes it may not even be considered. Take the development of the Health QR code in Hangzhou, China, as an example. When the incoming passenger flow was due to start within a week and the tracing system had to be developed within several days, it seemed that the only way out was to draw on existing digital infrastructures and develop whatever was workable for “the majority of the people”. After all, involving too many parties in a negotiation process can be time-consuming and organizationally tricky. In this sense, time pressure gets in the way of a more participatory design strategy.

However, even in cases that do not involve time pressure, are governments, companies, and other groups of designers willing to adopt this strategy? Not necessarily. They are often guided by a profit-oriented logic, which requires the product to be launched quickly as long as its features can appeal to “the majority of the people”. Therefore, for companies whose primary targets are not groups with difficulty using digital devices, such a strategy may again be regarded as irrelevant and time-consuming. Changing this mindset is definitely not easy, especially given that some companies tend to keep a younger workforce structure even at the organizational level. There are occasionally scandals about big tech companies in China, such as Huawei, planning to “structurally optimize” their workforce by removing many IT developers over 35 years old. How can a company possibly develop user-friendly products for older people when most of its designers are younger than 35?

Ethical Responsibility of Designers

So what is the responsibility of designers, broadly defined to include companies and government agencies that design and provide digital products? An insight from the field of science, technology, and society (STS) may be useful for both users and designers to consider. It has been one of the central arguments in the STS and design literature that technological design is about “doing ethics by other means” (Verbeek 2006: 361).[4] In other words, designers materialize morality, and there should be, at least at the normative level, no claim of innocence regarding the consequences of an artifact or a technological system (Parvin and Pollock 2020).[5] Following this argument, when designing an artifact or technological system, involving different parties and addressing diverse concerns can be regarded as the ethical responsibility of the designer. This does not mean that designers always need to assume legal liability or bear the moral criticism of society. Instead, it means that, from the designers’ own perspective, ensuring equality, paying attention to diverse concerns from different groups, and preventing potentially adverse social effects should always be a priority in the designing process.

Footnotes

1. Author’s note: starting from 9 December 2021, all customers/users entering premises regulated under the Prevention & Control of Disease (Cap. 599G), e.g. restaurants, cinemas and bars, must scan the LeaveHomeSafe venue QR code. The option of filling in paper forms is banned except for people aged 65 or above and aged 15 or below, people with disability and those recognised by the Government or organisations authorised by the Government. See https://www.news.gov.hk/eng/2021/12/20211206/20211206_175552_090.html.

2. Woolgar, Steve. 1990. “Configuring the user: the case of usability trials.” The Sociological Review 38, no. 1_suppl: 59.

3. Woolgar, Steve. 1990. “Configuring the user: the case of usability trials.” The Sociological Review 38, no. 1_suppl: 89.

4. Verbeek, Peter-Paul. 2006. “Materializing morality: design ethics and technological mediation.” Science, Technology, & Human Values 31, no. 3: 361-380.

5. Parvin, Nassim, and Anne Pollock. 2020. “Unintended by design: On the political uses of ‘unintended consequences’.” Engaging Science, Technology, and Society 6: 320-327.